What Is ISO in Photography? Here's a Technical Explanation

Most people get the gist of how to use ISO ratings in their photography, but what are they? Where do these numbers come from, and what's the difference between ISO in film and digital? In this tutorial we explore the history and technical underpinnings of the system. If you've ever wondered what ISO means or how it works, this one's for you!

The History

In photography, "ISO" means the standard system of measurement for how much light sensitivity a photographic film or sensor has. We can control the ISO to create an image that is correctly exposed: not too light and not too dark.

ISO stands for the International Organisation for Standardisation, a global body who work to standardize all kinds of products and processes for maximum interoperability and safety. In 1974, the ISO took the most recent advances in the German DIN and American ASA (now ANSI) systems and worked them into a single universal standard for film: ISO numbers. When digital sensors came out, manufacturers eventually adopted the same standard numbers.

The previous two systems, DIN and ASA, stretched back to the 1930s and 40s, before which various ratings systems coexisted from different manufacturers and engineers.

How Sensitivity Was Measured and Numbers Decided

What do the numbers themselves mean? There are four ISO standards, one each for color negative film, black and white negative film, colour reversal (slide) film and digital sensors. These are calibrated so that regardless of the type of film or medium, the effective sensitivity is the same. This is very useful for practical purposes while shooting as it allows the photographer more, and faster, control over exposure.

However, the differences in emulsion and interpretations of measurement processes across manufacturers, factories and even batches, as well as the inherent variability of a chemical process, mean that even with standardisation, results can vary. In the field, photographers have found that, for some films, setting cameras to slightly different ISO ratings than a particular film's nominal speed can give certain desirable results.

Sensitometry, Densitometry

Film speed is measured from a "characteristic curve," which describes a film's general tonal performance. Here's how it works:

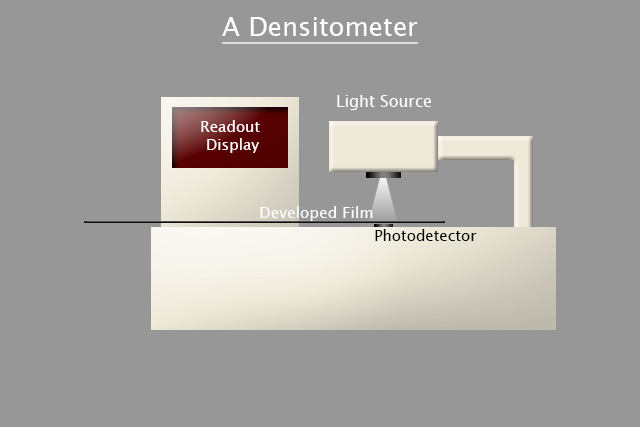

The tonal curve is created using a "sensitometric tablet," a special piece of glass consisting of a precisely calibrated array of 21 equally-spaced (from black to white) shades of grey. The graduated shades of grey are exposed onto the film. After processing, the graduated exposure of the emulsion can be read using a calibrated densitometer, a machine that reads the actual density of film.

The 21 steps are then each measured accurately, and once all 21 steps have been measured, the densities are plotted on a graph against the logarithm of exposure, with exposure measured in millilux-seconds.

This graph has various parts which explain various aspects of how the film performed, such as fogging, gamma, contrast, etc. The part we're interested in for the ISO speed rating of the film is the point where the density is 0.1 units above the minimum density; let's call the log exposure at this point x. This value of 0.1 isn't particularly scientific, but is traditionally accepted as the minimum difference in density that the average human eye can differentiate.
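As a rough sketch of how that speed point could be located from densitometer readings, the snippet below linearly interpolates between measured steps to find the log exposure at which density reaches the minimum density plus 0.1. The function name `speed_point` and the sample readings are hypothetical, made up for illustration; linear interpolation between steps is also an assumption, not a prescribed method.

```python
def speed_point(log_exposures, densities, threshold=0.1):
    """Return the log10 exposure where density reaches (minimum density + threshold).

    Uses straight-line interpolation between neighbouring measured steps.
    """
    target = min(densities) + threshold
    pairs = list(zip(log_exposures, densities))
    for (x0, d0), (x1, d1) in zip(pairs, pairs[1:]):
        # Look for the first rising segment that brackets the target density.
        if d1 > d0 and d0 <= target <= d1:
            return x0 + (target - d0) * (x1 - x0) / (d1 - d0)
    raise ValueError("curve never reaches the target density")


# Hypothetical readings: log10 exposure (millilux-seconds) and measured density.
log_h = [0.0, 0.3, 0.6, 0.9, 1.2, 1.5]
density = [0.20, 0.21, 0.25, 0.35, 0.55, 0.80]

x = speed_point(log_h, density)
print(f"speed point at log exposure x = {x:.2f}")
```

With these invented readings the minimum density is 0.20, so the target is 0.30, which falls between the steps at 0.6 and 0.9.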

The equation for film speed (yes, there is one) is $\text{speed} = \frac{800}{\log^{-1}(x)}$, where $\log^{-1}(x) = 10^x$ is the exposure in millilux-seconds at point x. If the exposure is measured in lux-seconds rather than millilux-seconds, this becomes $\text{speed} = \frac{0.8}{\log^{-1}(x)}$. Note that I write log for base-10, not ln for natural log (base-e).

The important part is that, generally, as the speed doubles or halves, so too does the sensitivity to light.
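To make the relationship concrete, here is a minimal sketch of the millilux-second form of the formula. The function name and the two example exposures are my own choices for illustration; the example just shows that halving the exposure needed at the speed point doubles the speed.

```python
import math


def film_speed(x):
    """Speed from x, the base-10 log of the speed-point exposure in millilux-seconds."""
    return 800.0 / (10.0 ** x)


# Hypothetical speed-point exposures of 8 and 4 millilux-seconds.
slow = film_speed(math.log10(8.0))
fast = film_speed(math.log10(4.0))

print(f"8 mlx·s -> speed of about {slow:.0f}")
print(f"4 mlx·s -> speed of about {fast:.0f}")
```

The film that needs half the exposure comes out with twice the speed number.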

How Sensitivity Changes in Film

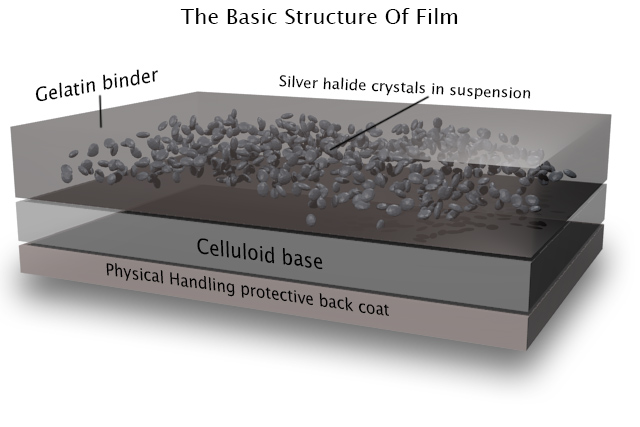

Film is made of a suspension of silver halide crystals in a gelatin binder. This emulsion is finely layered many times along with any dyes for color or processing agents onto a celluloid base, protected on the back side with physical handling coatings. The silver halide crystals are the actual photoreactive medium.

On their own, they are only reactive to the blue end of the visible light spectrum (hence the need for UV filters when shooting film), so they're coated or impregnated during growth with organic compounds which sensitize them to the full visible spectrum.

Photons hitting the silver halide crystal pass their energy into it. This causes an electron to be ejected from a halide ion in the silver halide crystal. This can be trapped by a silver ion to form an electrically neutral silver atom.

This is not stable, however. More photoelectrons must be available in the same region to form more silver atoms in order for a stable cluster of at least three or four silver atoms to be formed. Otherwise, they can easily decompose back into silver ions and free electrons. More silver atoms can form as long as photoelectrons are being generated.

After exposure, but before processing, your film has a latent image: no image exists yet, but if we dunk it into the right chemicals we can make one.

In processing, an atom cluster of pure silver of the stable size I described above will catalyse the reaction with the developer, which then decomposes the whole crystal into a metallic silver grain, which appears black due to its size and unpolished surface.

The fixer then fixes the image by dissolving the remaining silver halide salt crystals, which are then rinsed away (and hopefully stored for recycling). This has been the general basis of photography for over a century. So what does this have to do with the sensitivity of film?

The answer to that is really quite simple: probability. The larger the silver halide crystals, the more likely it is that photons will hit them and be absorbed. To use a basic analogy, if you wave a large butterfly net through a large swarm of butterflies, you're likely to catch more of them than with the same wave through the same swarm with a small net.

Larger crystals have a greater surface area facing the lens, and logically, light sensitivity directly correlates with the likelihood of light hitting the surface.

Thus slow films like ISO 25, 50 and 100 have very fine grains, which catch less light but are useful for capturing fine detail. Conversely, very fast films like ISO 1600 and 3200 have relatively huge grains for the maximum possible chance of capturing photons, hence their extremely grainy quality.
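The butterfly-net idea can be put into a toy probability model. Everything in the sketch below is an assumption for illustration: photon absorption is treated as a Poisson process whose mean scales with grain area, each absorbed photon is assumed to yield one silver atom, decay of unstable clusters is ignored, and the flux value and areas are invented. It only shows the trend that a bigger grain is more likely to reach a stable cluster of four atoms.

```python
import math


def prob_stable_cluster(mean_photons, threshold=4):
    """Probability of absorbing at least `threshold` photons, assuming Poisson statistics."""
    p_fewer = sum(
        math.exp(-mean_photons) * mean_photons ** k / math.factorial(k)
        for k in range(threshold)
    )
    return 1.0 - p_fewer


flux = 2.0  # hypothetical absorbed photons per unit of grain area during the exposure
for area in (0.5, 1.0, 2.0, 4.0):  # hypothetical relative grain areas
    p = prob_stable_cluster(flux * area)
    print(f"relative area {area}: chance of a developable grain = {p:.3f}")
```

For the same light, the probability climbs steadily with grain area, which is the behaviour the fast, coarse-grained films rely on.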

How Sensitivity Works in Digital Imaging

Digital cameras, having no chemical process, cannot be measured using the same method as film. The ISO ratings system, however, is designed to be reasonably similar to film in terms of actual light sensitivity. Technically the term for digital sensors is "Exposure Index" rather than "ISO," but because an ISO standard covers it, I see no issue using the more traditional "ISO." Practically speaking, most of the world agrees.

Instead of a minimum visible exposure level, digital sensors have their sensitivity determined by the exposure required to produce a predetermined characteristic signal output. The ISO standard governing sensor sensitivity, ISO 12232:2006, describes five possible methods to determine sensor speed, although only two of them are regularly used.

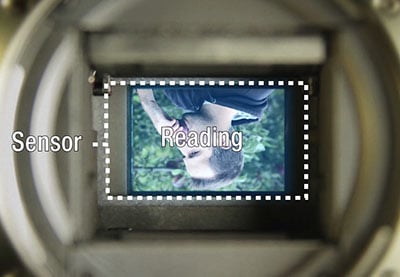

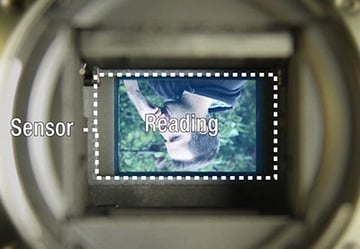

A camera's sensor consists of a matrix of millions of microscopic photodiodes, usually covered with microlenses for extra light-gathering and a Bayer pattern filter in order to capture color. Each one represents a single pixel.

A photodiode can be run in zero-bias (no applied voltage) photovoltaic mode, where output current is restricted and internal capacitance is maximised, resulting in a photoelectron build-up on the output.

It can also be run in reverse-biased (run backwards) photoconductive mode, where photons absorbed into the p-n junction release a photoelectron that directly contributes to the current flowing through the diode.

Camera sensors use the latter, as the voltage applied to reverse bias the diode both increases the ability to collect photons by widening the depletion region and reduces the likelihood of recombination due to the increased electric field strength pulling the charge carriers apart.

Suddenly lost? Let's go over the operation of the photodiodes that make up the sensor in your camera.

A (Somewhat) Basic Interlude on Photodiodes

In plain language, when light hits your sensor, it excites the material. This excitement causes a tiny electric charge to flow from one part of the sensor to another. When it does, we can measure that and turn that pulse into a signal, which we can then turn into an image.

Here it is again in the technical specifics:

A photodiode is essentially a normal semiconductor diode, a device which allows the flow of electrical current in one direction only, with the p-n junction exposed to light. This allows photoelectrons to impact the electronic operation of the device, i.e. it makes the sensor light sensitive.

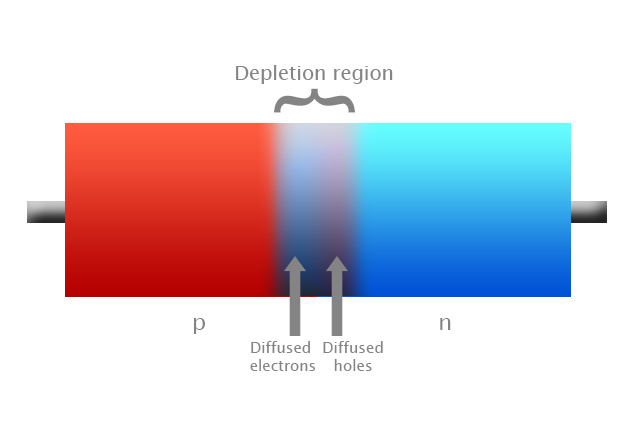

A p-n junction is a piece of positively-doped semiconductor fused with a piece of negatively-doped semiconductor. Doping is infusing impurities which donate or accept electrons in order to alter the availability and polarity of charge in a piece of semiconductor. This selective manipulation of charge is the basis of all electronics.

Close to the junction point in the semiconductor, the electrons on the negative-doped side are attracted to, and tend to diffuse into, the positive-doped side. On the positive-doped side there are holes: sites in the semiconductor lattice that lack an electron, resulting in a net positive charge. Holes are treated as positively-charged particles for general purposes. These equally have a tendency to diffuse into the negative-doped side.

However, once enough mobile charge carriers (the electrons and holes) have accumulated in each side, there is enough charge there to generate an electric field which tends to repel more charge carriers from diffusing. A charge equilibrium is reached. The diffusing carriers are equal to the repelled carriers in each direction.

This equilibrated area near the junction is what's called a depletion region, where there's a cloud of electrons on the positive-doped side of the junction, and a cloud of holes on the negative-doped side. The carriers have been depleted from their original positions, and have created a charge difference, resulting in an electric field, i.e. a built-in voltage potential. This is the basis for a diode. A photodiode is essentially the same thing, but with a transparent window to allow photons to hit the depletion region.

Reverse-biasing the diode widens the depletion region by overcoming the natural charge equilibrium of the depletion region and setting a new one, where the innate electric field must now be strong enough to oppose the attraction, the diffusion and also the applied electric field. This, of course, requires a larger depletion region containing more charge to generate a stronger field.

When a photon of sufficient energy hits and gets absorbed by the semiconductor lattice, it generates an electron-hole pair. An electron gains enough energy to escape the atomic bonding of the lattice and leaves behind a hole. Recombination can occur immediately, but largely what happens is that the electron gets pulled in the direction of the negative-doped region and the hole towards the positive-doped region.

Often they can recombine with other charge carriers in the semiconductor, but ideally, with optimised transit distance from the photosite to the electrode collector (short enough to avoid recombination, but long enough to maximise photon absorption) the carriers will reach the electrode and contribute to the photocurrent to the read-out circuit.

Long story short, the more photons are absorbed the more charge carriers make it to the electrodes, and the higher the current read-out sent to the camera's analog-to-digital converter. The higher the current, the higher the exposure being received and the brighter the pixel.

Digital ISO

As I mentioned above, ISO is often measured using the exposure required to saturate the photosites. I just explained what the photosites are: the depletion regions within the photodiodes. So how do they become saturated? Well, the number of electrons available for photons to excite is not unlimited. After a certain amount of light energy is absorbed, the semiconductor has released as much charge to the electrodes as it can, and no longer responds to further exposure.

Photographically, this is the full-well capacity, or highlight clipping point. Usually manufacturers deliberately mis-rate their sensors in order to retain headroom in the highlights, allowing highlight recovery in RAW.
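A toy model of a single photosite makes the clipping behaviour easy to see. The quantum efficiency (the fraction of photons that yield a collected electron) and the full-well capacity below are hypothetical round numbers, not figures for any real sensor, and the linear response up to the limit is a simplifying assumption.

```python
def collected_electrons(photons, quantum_efficiency=0.5, full_well=50_000):
    """Electrons read out from one photosite, clipped at the full-well capacity.

    quantum_efficiency and full_well are illustrative assumptions.
    """
    electrons = photons * quantum_efficiency
    return min(electrons, full_well)


for photons in (10_000, 100_000, 200_000, 1_000_000):
    print(f"{photons:>9} photons -> {collected_electrons(photons):>8.0f} electrons")
```

Past the saturation point, extra light makes no difference to the read-out, which is exactly what blown highlights look like in the data.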

Saturation-based Speed Test

According to ISO 12232, the equation to define saturation-based speed is $S_{sat} = \frac{78}{H_{sat}}$, where $H_{sat} = L_{sat} t$. $L_{sat}$ is the illuminance required to reach sensor saturation for a given exposure time $t$. The 78 is chosen such that an 18% grey surface will be recorded at 12.7% of the saturation level.

This allows highlight headroom in the final rating for specular highlights to roll off naturally and not as blocky dots. This rating is most useful for studio photography where illumination is precisely controlled and maximum information is required.
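Here is a small sketch of the saturation-based formula, with the saturation exposure taken in lux-seconds. The illuminance and shutter time are invented so that the result lands near a familiar number. The last line checks that the 12.7% figure matches 18% divided by the square root of two, i.e. half a stop below 18%.

```python
import math


def saturation_exposure(l_sat, t):
    """H_sat = L_sat * t: illuminance at saturation times exposure time."""
    return l_sat * t


def saturation_speed(h_sat):
    """Saturation-based speed S_sat = 78 / H_sat, with H_sat in lux-seconds."""
    return 78.0 / h_sat


# Hypothetical measurement: the sensor saturates at 97.5 lux with a 1/125 s exposure.
h_sat = saturation_exposure(l_sat=97.5, t=1 / 125)
speed = saturation_speed(h_sat)
print(f"H_sat = {h_sat:.2f} lx·s -> saturation-based speed of about {speed:.0f}")

grey_level = 0.18 / math.sqrt(2)
print(f"18% / sqrt(2) = {grey_level:.3f}")
```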

Noise-based Speed Test

The ISO defines another rating test which is lesser-used but is more useful for real-world scenarios, which is the noise-based speed test.

This is a rather subjective test, as the image quality and test criteria are somewhat arbitrary; the signal-to-noise (S/N) ratios used are 40:1 for "excellent" IQ and 10:1 for "acceptable" IQ, based upon viewing a 180dpi print from 25cm away. The noise is defined as the standard deviation of a weighted average of the luminance and chrominance values of multiple individual pixels in the frame.

Standard deviation is a way of mathematically deriving the variation in values in collected data from the average or expected value. Take the difference between each value and the average, square it, sum the squares, divide by the number of data points in the set, and take the square root. Essentially, it's a kind of average of the deviations.

Photographically, what this means is that the test pixels are averaged out to find the "expected" value of the light signal. Then the standard deviation defines how far away the individual test pixels tend to be from this average. Assuming the pixels are relatively uniform in value, this deviation from the average is noise, either from the sensor or the processing electronics.

Noise

The ratio between the average value (signal) and the standard deviation (noise) is the S/N ratio. The higher this ratio, the less noise there is in the signal. For example, for the "excellent" image quality standard of 40:1, this means that on average, for every 40 units of image signal, there's only one of noise. The huge difference between the image and the noise is what creates the clean image.
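The calculation can be sketched in a few lines. This is a simplified, single-channel version: it uses plain pixel values from a patch that should be uniform, rather than the weighted luminance and chrominance average the standard describes, and the pixel values are made up. `pstdev` is the population standard deviation, which divides by the number of data points.

```python
from statistics import mean, pstdev


def signal_to_noise(pixels):
    """S/N ratio of a nominally uniform patch: mean value over population standard deviation."""
    signal = mean(pixels)
    noise = pstdev(pixels)
    return signal / noise


# Hypothetical read-outs from a patch that should be an even grey.
patch = [98, 102, 100, 97, 103, 100, 101, 99]

snr = signal_to_noise(patch)
print(f"signal = {mean(patch)}, noise = {pstdev(patch):.2f}, S/N = {snr:.1f}:1")
```

This invented patch averages 100 with a noise of a little under 2, so it clears the 40:1 "excellent" threshold. A perfectly uniform patch would have zero noise, which this sketch does not guard against.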

Noise can be introduced in several ways: saturation/dark current across the photodiodes, random thermally-released electrons in the photodiodes or processing electronics (thermal noise), charge carrier movement across the depletion region of the photodiodes (shot noise), and imperfections in crystal structure or contaminants which result in random captures and releases of electrons (flicker noise).

The increase in noise from increasing the ISO setting on the camera is a result of increasing the gain of the pre-amplifiers between the sensor and A/D converter. The S/N ratio is necessarily reduced, as in order to produce a "correct" exposure with high amplification, there must be less exposure. Less exposure means less signal, thus relatively greater noise as a fraction of that reduced level.

A simple mathematical example: say at ISO 100, a correct exposure is achieved by filling a particular pixel to 80% well capacity, and its S/N ratio is 40:1, so the noise amounts to about ±2% of well capacity (80% divided by 40). Boosting the ISO to 800 means that the amplifiers are boosting the signal by 8x, and thus the correct exposure is reached at only 10% well capacity. The ±2% noise level, however, remains about the same and gets amplified right along with the signal level. Now that 40:1 S/N ratio has become a 5:1 ratio (10% divided by 2%), and the image is useless.
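The same arithmetic as a short sketch, using the simplified model from the example above, in which the noise stays at a fixed fraction of well capacity regardless of exposure. Real sensors are more complicated, so treat this as an illustration of the reasoning only. The function and parameter names are my own.

```python
def snr_at_iso(iso, base_iso=100, base_fill=0.80, base_snr=40.0):
    """S/N ratio at a given ISO, assuming noise is a fixed fraction of well capacity."""
    noise = base_fill / base_snr   # 0.80 / 40 = 0.02 of well capacity
    gain = iso / base_iso          # amplification relative to base ISO
    fill = base_fill / gain        # well fill needed for a "correct" exposure
    return fill / noise


for iso in (100, 200, 400, 800):
    print(f"ISO {iso}: S/N of about {snr_at_iso(iso):.0f}:1")
```

Each doubling of ISO halves the exposure reaching the well, and in this model halves the S/N ratio with it, from 40:1 at ISO 100 down to 5:1 at ISO 800.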

Conclusion

You can see why it's important to shoot with as much exposure and as little amplification as possible. Circuitry and sensor technology, as well as denoising algorithms, are constantly improving; just think about the difference between an ISO 800 shot from 2008 vs an ISO 800 shot from today. The majority of images are also now viewed at relatively small sizes online, and resizing also reduces noise.

For large format printing purposes, though, you can see why it's vital to shoot with lots of light and at base ISO. Hence also the maxim "expose to the right," meaning get the image as bright as possible on the histogram without clipping highlights. Not only does that maximise the amount of light signal compared to the reasonably fixed noise level of the imaging electronics, but the way the data is digitised means that more information can be stored in the highlights than in the shadows.
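The point about highlights holding more information can be illustrated with a little counting. The sketch assumes a linear analog-to-digital conversion and a 12-bit depth, both chosen for illustration: each stop down from clipping represents half the light, so it gets half as many digital levels as the stop above it.

```python
def levels_per_stop(bit_depth=12, stops=5):
    """Number of digital levels in each stop below clipping, assuming linear encoding."""
    top = 2 ** bit_depth
    counts = []
    for _ in range(stops):
        counts.append(top - top // 2)  # levels between this stop's ceiling and its halfway point
        top //= 2
    return counts


for stop, count in enumerate(levels_per_stop(), start=1):
    print(f"stop {stop} below clipping: {count} levels")
```

Under these assumptions the brightest stop alone takes 2048 of the 4096 available levels, while the fifth stop down gets only 128.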

That's about it, I think. I hope this article was of interest, possibly even use, to some of you, and that you didn't get too lost in the technicalities of solid-state physics!

Comments? Questions? Hit up the comments below!
